From laptop to hospital: how do we apply AI in practice?

Wynand Alkema talks data science - Research stories

There is no getting around it these days: AI is going to change our lives - and jobs. That includes healthcare, diagnostics, medication and nutrition. But how will this take shape? To understand this, we talked to Wynand Alkema, professor of Data Science for Life Sciences and Health. His advice? "You should always have industry-related insight and engage with people from the field: what are they looking for, what is their day-to-day like, what issues do you encounter? During these conversations, you should keep potential data solutions in the back of your mind, and combine the two worlds."

How does AI research make the move from laptop to hospital? Wynand will explain. But first, the basics:

For some it is the ultimate solution, for others the end of humanity. But what exactly is AI? "It is an approximation of how a human thinks, but by a machine. It recreates thought processes, such as combining facts and drawing conclusions as a human would." Just like humans, AI learns from previous experiences. Humans recognise patterns: every time A happens, B happens too. "Well, this is actually what artificial intelligence does too," he says.

These experiences are called inputs in the context of AI. After a while, an AI model can predict consequences based on input - we call these consequences output. The more examples you give to such a model, the better it learns (just like a human). This is called training the model. This is done by a data scientist. A data scientist makes sure the model gets the right inputs, and analyses the outputs. "It is like when you are teaching a child or a student something. As a teacher, you are making sure they get the right educational material, and then you start testing whether it has all been understood correctly."
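To make the idea of inputs, outputs, training and testing concrete, here is a minimal sketch in Python. It is not a method described by Wynand: the blood values, the labels and the function names `train` and `predict` are all invented for illustration, and the "model" is about the simplest one imaginable (it remembers the average input per output label).

```python
def train(inputs, outputs):
    """Training: learn the average input value for each output label."""
    grouped = {}
    for x, y in zip(inputs, outputs):
        grouped.setdefault(y, []).append(x)
    return {label: sum(xs) / len(xs) for label, xs in grouped.items()}


def predict(model, x):
    """Prediction: pick the label whose learned average is closest to x."""
    return min(model, key=lambda label: abs(model[label] - x))


# Hypothetical training data (invented numbers): one blood measurement
# per person as input, and whether that person was ill as output.
train_inputs = [4.1, 4.5, 5.0, 8.9, 9.4, 10.2]
train_outputs = ["healthy", "healthy", "healthy", "ill", "ill", "ill"]

model = train(train_inputs, train_outputs)

# Testing: check the model on examples it did not see during training,
# like a teacher checking whether the material was understood.
test_inputs = [4.8, 9.0]
test_outputs = ["healthy", "ill"]
predictions = [predict(model, x) for x in test_inputs]
correct = sum(p == t for p, t in zip(predictions, test_outputs))
accuracy = correct / len(test_outputs)

print(predictions, accuracy)
```

Real models use many more inputs and far more examples, but the loop is the same: provide examples, let the model learn, then test it on cases it has not seen.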

Poor or incomplete teaching also means poor results. In fact, artificial intelligence fails in the same way that human intelligence fails; it copies our blind spots and biases. "Thus, there are many examples where, often also due to ignorance, the wrong input data is used or the wrong output data, and you get a model that is not suitable for the intended purpose. The outputs generated by these models are then used to train other models, so you get an amplifying effect of the error that crept in at the beginning."

In his professorship Data Science for Life Sciences and Health, Wynand Alkema focuses on data solutions for challenges in life science industries. Examples include healthcare, medication, and diagnostics, but also nutrition. But how does this actually work? How can a computer system or AI algorithm help a doctor recognise symptoms, or develop healthy diets?

"You use the same techniques, but the predictions you want to make are done in the field of life sciences. You can now answer questions like: 'is this drug appropriate for this patient?' or 'when I see this blood count of a patient is that an indication that he is getting sicker or actually on the mend?' or 'would this new ingredient work in this recipe'?"

Let's take medical issues as an example. AI uses large amounts of data to recognise patterns and make connections that are impossible for the human brain to grasp. It can, for example, analyse data on disease patterns from thousands of patients with the same type of cancer. "There are databases with thousands of measurements from patients with a certain type of cancer as well as healthy people. You can compare these two groups and, very simply put, ask 'which piece of input always results in the output of a cancer diagnosis?'" With AI, we can quickly process all this data to draw conclusions. This can lead to the development of new diagnostic tests and personalised treatments. But there are also pitfalls: how do we ensure the quality of the data, how is this data captured, and is the metadata (information about the characteristics of the data) complete? In many cases, researchers have to use public data, or generate data themselves.

Generating big data is complicated with humans, but this becomes simpler when you work with other organisms, such as bacteria. For example, Wynand collaborates with professor Janneke Krooneman. Among other things, Janneke is researching the production and degradation of the biopolymer PHA by bacteria. How can you use AI for research on bacteria? "Suppose you have some bacteria that break down bioplastics, and some bacteria that don't. With AI, you can measure and compare thousands of characteristics of these bacteria - manually, it is impossible to examine which factors correlate with plastic degradation and which do not." Wynand's research supports the research of Janneke and other researchers at the knowledge centre by making predictions: "This allows us to feed researchers working here at the lab with the activities that have a higher chance of success than those that have a lower chance of success. This means fewer experiments that fail and less waste, as well as faster implementation of research results in practice."
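The comparison of characteristics described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the method used by the research group: the three feature names, the six strains and all numbers are invented, and a plain Pearson correlation stands in for whatever technique is actually used.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation between two equally long lists of numbers."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = sqrt(sum((y - mean_y) ** 2 for y in ys))
    if spread_x == 0 or spread_y == 0:
        return 0.0  # a constant column carries no information
    return cov / (spread_x * spread_y)


# Hypothetical measurements for six bacterial strains (invented numbers).
features = {
    "enzyme_activity": [0.9, 0.8, 0.7, 0.2, 0.1, 0.3],
    "growth_rate": [0.5, 0.4, 0.6, 0.5, 0.6, 0.4],
    "cell_size": [1.0, 1.2, 0.9, 1.1, 1.0, 1.2],
}
# 1 = this strain breaks down the bioplastic, 0 = it does not.
degrades_plastic = [1, 1, 1, 0, 0, 0]

# Rank characteristics by how strongly they go together with degradation.
ranking = sorted(
    features,
    key=lambda name: abs(pearson(features[name], degrades_plastic)),
    reverse=True,
)

for name in ranking:
    print(name, round(pearson(features[name], degrades_plastic), 2))
```

With thousands of characteristics instead of three, a ranking like this is what lets researchers try the most promising experiments first. A strong correlation is only a hint about where to look; it does not prove that a characteristic causes the degradation.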

The possibilities of AI are endless and seem promising, but where do you start? According to Wynand, you have to start with the practical problem: "You start with the question: what is the problem I want to solve? For example, if we are talking about a company: what is the point where the production process is failing?" Once you have found the problem, you can move to the next step: what data do I need to find a solution to this problem?

For example: a water supply company wants to predict where their pumps will break down first. With this knowledge, they can focus their maintenance on the weakest link. To solve this issue, you need information on all relevant factors: data on the pumps, data on when the pumps are used the most, where the pumps have broken down before, etc. If you don't have enough input data (information about the pumps and the environment) or output data (information about the consequences for the pumps such as leakage or damage), then you can't train a model. Then there is the business case to consider. How much does this model cost? What will it yield if this problem is solved?

If your business case is solid, how will you apply it in practice? Will employees or customers use the model? Not every model is a success. Wynand: "Of the 3,000 models there are in the scientific domain for diagnosing a patient, only one survives in the clinic." All other models do not fit the reality of the workplace.

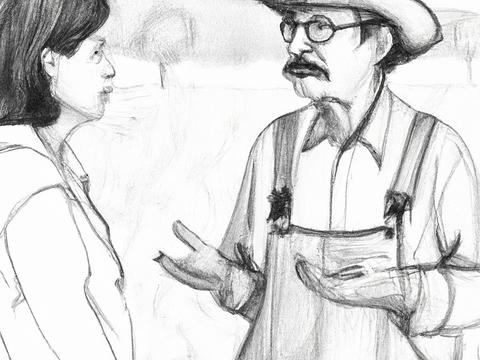

Therefore, Wynand's advice is to work with the professional throughout the process: "You have to stand next to the doctor, next to that farmer, or next to that nurse, and say, 'how do you work? What does your computer screen look like? How does your process work? If I can do something for you, where in that process can I help you?'" This facilitates acceptance; after all, the solution was developed together with the professional.

AI is already present in our daily lives. Think of the algorithms in your social media apps that analyse your usage and recommend new content. Language models, such as ChatGPT, have also been around for a while now. What distinguishes the recent wave of AI applications is that it is generative: it creates new content. In addition, it has now been made very easy and accessible, by building a good interface around it. "For people in the field, it wasn't new. Yet you were immediately confronted with how powerful it is. I was surprised by that too." The convenience of ChatGPT underlines how important the customer or user is when developing AI.

What other developments can we expect? "Where will it go? I really don't dare say." What is clear, however, is that things are moving fast, making many students and professionals nervous about the future of their jobs. If you want to ride the AI wave, how do you tackle it? What do you focus on? And can you even compete with the Silicon Valley giants? "In the future, we need to look more for the niches," Wynand explains.

"We need to look at what is already possible, what is already there, and what the need of the customer or user is, whether it is a farmer, a doctor, or a software developer. We need to commit to speaking two languages. The mathematics of data, and the language of the user, getting to the bottom of the customer's need." That is where you can add value, and that is what Wynand's research group is focusing on. "You have to be interested in the processes you want to improve. That includes industry insight. That's why we [in our research group] don't actually do AI for energy management or for web shops or that world. We do AI for life sciences - and that is already very broad."

What the future will bring is unclear. What is clear is that data scientists need to speak two languages: data language and user language. With this skill, they can not only ensure good implementation of AI, but also influence how AI is applied. For example, they can join conversations about ethical issues, misinformation or security. They can shed more light on what AI can and cannot do. Finally, they can be ambassadors for responsible use of AI (for example, in the context of AI's climate impact, as training models is an energy-guzzling process). Like any tool, AI can be used for the good of society. In other words, it is up to the data scientists.

Data Science for Life Sciences and Health falls under the professorship Biobased Ingredients. This professorship researches and develops sustainable food and ingredients in a biobased economy.
